If you thought Google’s I/O conference this year was going to be all about hardware, think again. That’s what last week’s announcement of the Pixel 8a was all about, which is why there were so many reviews of the Pixel 8a this week.

Last week got the hardware out of the way. Google is mostly a software and search company, and so this week is all about what those plans for software and search will look like.

And my, there are a lot of them.

“Google is fully in our Gemini era,” wrote Sundar Pichai, CEO of Google and Alphabet, on the Google Blog.

For those unaware, Gemini is Google’s artificial intelligence system, positioned as a competitor to OpenAI’s ChatGPT and Meta’s Llama, the latter of which is beginning to appear in Facebook, Messenger, Instagram, and WhatsApp. It can be divisive, but it’s a part of those apps now, and Google’s AI is about to be a part of not just how you search, but how you use Google’s products in general.

Interestingly, you might not get a choice in the matter, either.

In a story that’s clearly still evolving — because Google I/O 2024 is on for the next few days — Google is outlining how its products will change, and it’s a pretty expansive list.

“We want everyone to benefit from what Gemini can do. So we’ve worked quickly to share these advances with all of you,” said Pichai.

Search

Let’s start with a big one: search.

That’s what Google has been known for, and what brought Google to life back in the late 90s. Search is constantly evolving, though in recent months, Google Search has felt weird, almost as if you’ve been unable to find what you’re looking for.

In its ongoing tweaks to search, the big G has been applying updates that have practically decimated some websites, removing them from searches on the very topics they write about, while noting throughout the process that there are ways to recover.

While little of that seems to be happening, and Google isn’t quite addressing how some of these sites are now apparently unhelpful in its “Helpful Content Update”, the company is pushing on with how search will evolve. Hopefully that means more than just pushing Reddit to the top of searches, a situation that happened shortly after Google struck a deal to train its AI on the site and service. Convenient.

Using some of that data, and no doubt the data Google has learned by reading and comparing every webpage for almost three decades, Google Search will expand its use of AI by providing summaries of search from text and video searches.

The video above gives an overview of what search is trying to evolve into, but the crux of it boils down to a sense of immediacy with answers where search results become information, as opposed to websites you click.

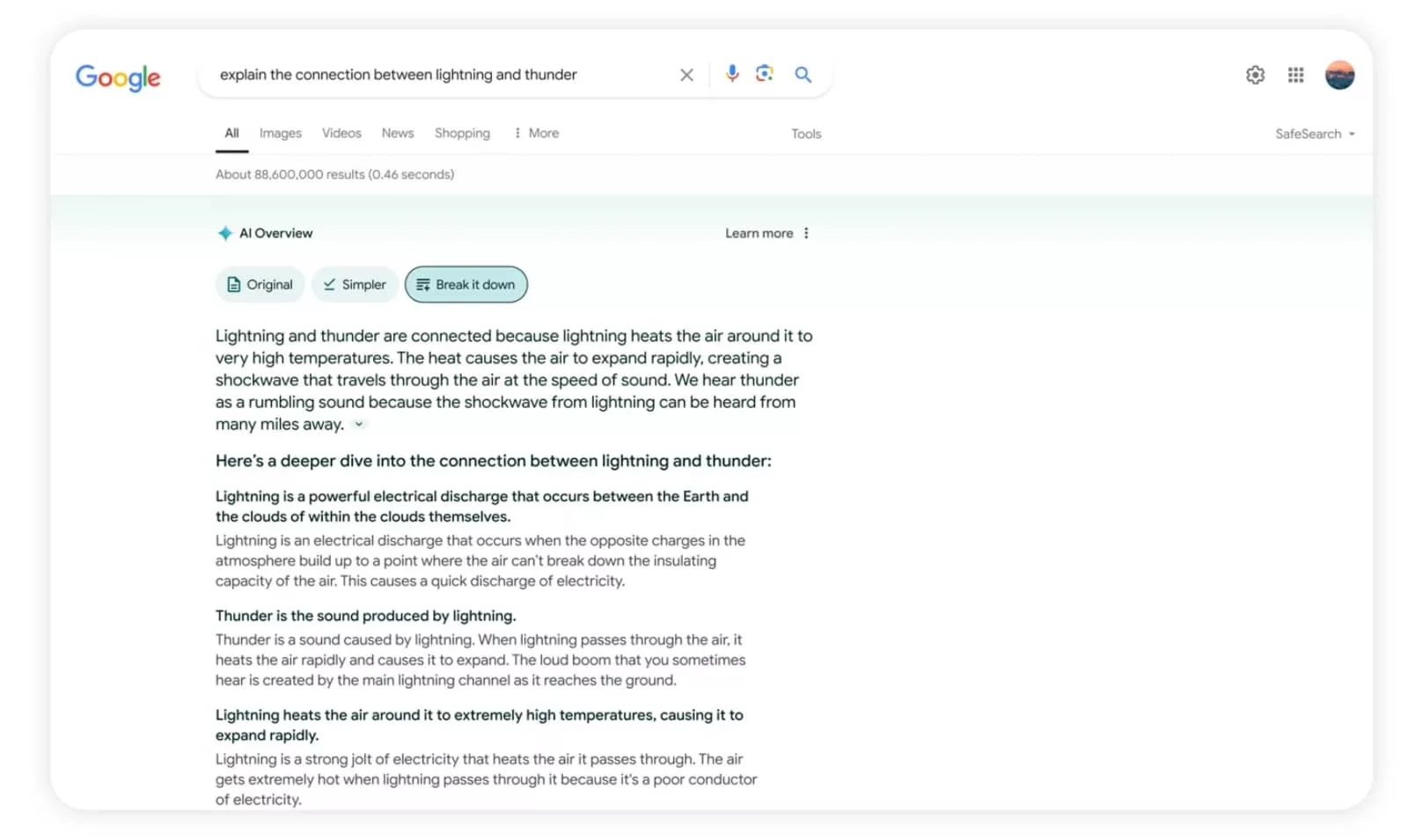

AI Overview appears to be Google’s evolution of the Search Generative Experience, an experimental AI feature that has been in testing mostly in the US and did exactly this for searches that it affected.

Essentially, you’ll be able to ask questions and have Google provide a summary of the answer distilled from websites that you won’t have to visit, with the information all found in the one page.

Not every bit of information may be a good one — there’s an example in the video above suggesting opening the back of a camera while film is inside, which would undoubtedly expose the film and ruin your images — but hopefully AI can improve and weed out bad results.

On the one hand, that could be great for people using search, but on the other, it seems like an odd situation for the creators writing content for webpages.

In that situation, we’re not entirely sure how Google is encouraging them to keep on writing, particularly if their content is being distilled for a search system that has no intention of sending users back to them in the first place. It’s almost as if Google is suggesting creators should keep on creating, and Google will keep on summarising, even if users may see less of the source material.

With that in mind, we doubt the AI Overviews will appear for every search, but they may appear for many, especially if there’s something AI thinks it can solve or assist with. This may come down to the intent of the search, something that Google can qualify.

Interestingly, you will be able to tweak your AI Overviews, with various levels of language simplification, though you might not be able to turn it off completely.

However, Google’s Search Liaison, essentially a spokesperson for Google Search that you can write to and who will hopefully respond, has noted there is also a new “Web” filter for search that will deliver a regular page of search results, not just more AI.

In terms of rollouts, Google’s AI Overviews will likely launch in Australia soon, but will see US users in their grip first, before rolling out to other countries later on.

Android 15

Outside of Google Search, Android is arguably Google’s biggest product.

It’s one of the two big mobile operating systems, alongside Apple’s iOS, and it powers a little over half of the phones in Australia. That’s a lot, and you can apply some of that logic to other parts of the world, too.

Google I/O has long been a place where the next version of Android is announced, and shock horror, that’s what’s happening this week, too. Understandably, AI is a big focus, as Google integrates AI and Android.

Circle to Search is already a part of new Android phones, including the Pixel phones and the Galaxy S24 range it launched on, but AI will go deeper in Android, as Gemini improves the Google Assistant.

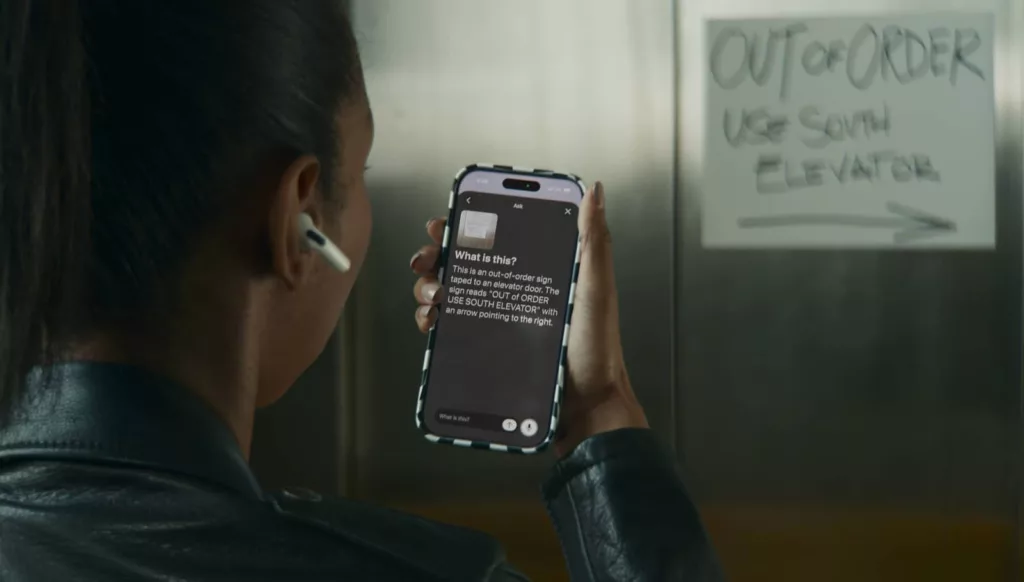

New versions of Android will include an on-device AI model with Gemini Nano, a system that will be able to understand what’s on your phone and the information you use on it, and will let you talk to it while the AI talks back in conversation. This approach is called “multimodality”, which means processing and dealing with the context of your words and conversation, and being able to relay that information to you. Almost like a human can, except in AI.

You likely won’t be talking to your phone all the time, but Gemini Nano could help people who do, such as low-vision users who rely on accessibility features. TalkBack with multimodal capability will mean more information can be relayed about what’s on-screen than just what the object is.

And that’s not all.

Google’s Gemini Nano model also has the ability to get in the way of phone call scams, providing real-time alerts if a conversation pattern matches that of a scam.

Google hasn’t noted how this will work, or even which phones it will come to first, but has said its scam detection alerts will be opt-in, with more information to come later this year.

Photos

If you’re an Android user, or just someone who uses a Google Nest Hub to show photos of the family, you probably use Google Photos. It’s one of the many storage options for folks who have photos.

Pretty soon, AI will impact that platform, too, as Google rolls out more of its Gemini technology to its photo storage app and service.

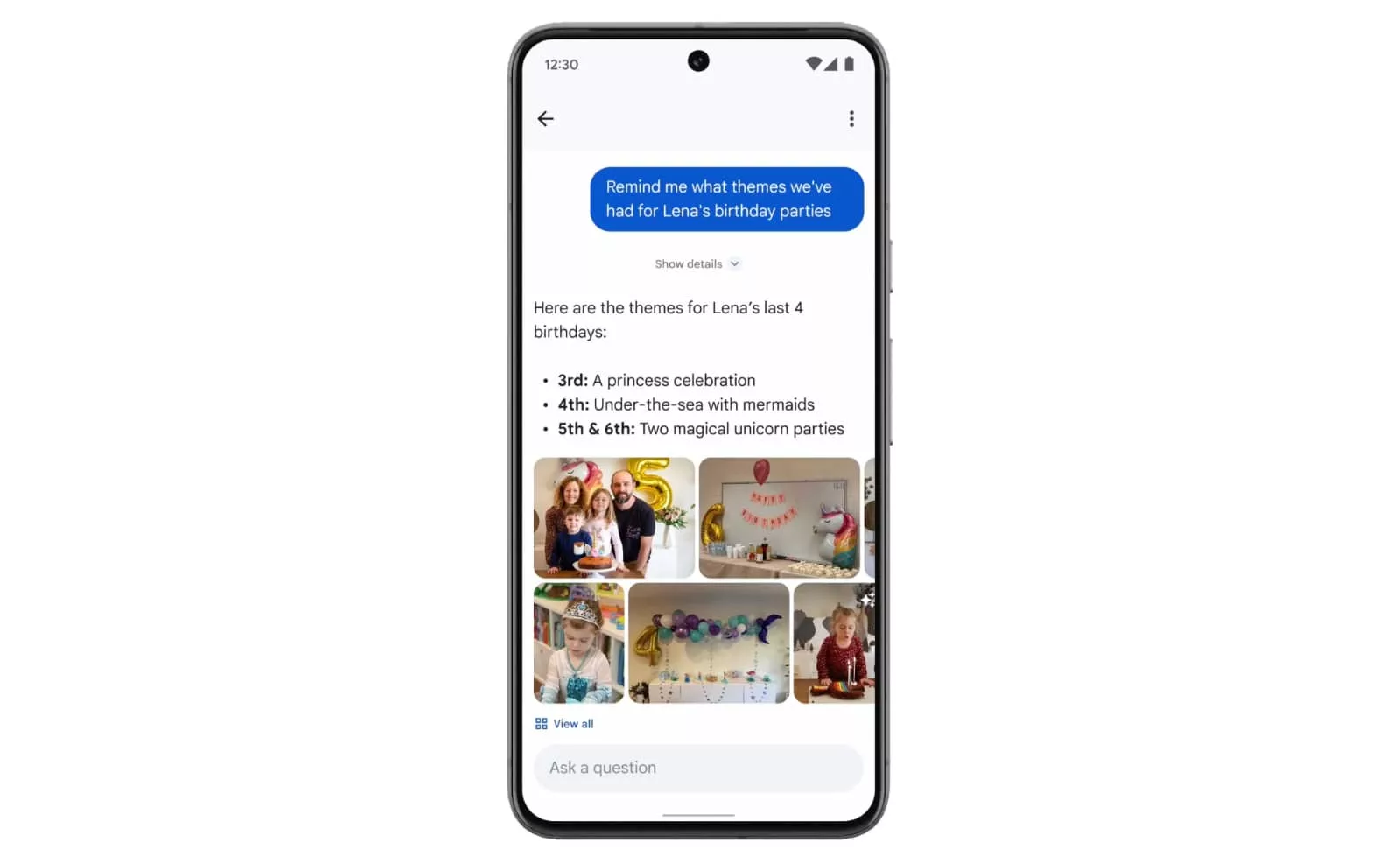

“Ask Photos” will essentially allow you to ask questions about your photo library, using the information in your library about people and places, and joining the dots from questions and queries.

That could mean picking out the themes in your photos, surfacing the best photos you have, or simply finding when you visited somewhere.

It’s a little like the AI Overviews search feature, but applied to your photo library, and it’s coming soon to Google Photos users.

Homework

Circle to Search may have provided an evolution for folks to search, but it also aims to solve problems.

As part of how the system works, you’ll be able to circle a maths problem and get answers on how to solve it, thanks in part to an AI model built to explain educational problems and help with research.

Content creation

Even folks building and creating might be able to tap into Google to get more done.

While it’s not quite Adobe’s Firefly AI or even Midjourney’s image creation tech, Google is experimenting with content creation tools as part of its Labs.Google projects.

That will include a video creation system called “VideoFX”, an improved image making model called “ImageFX”, and one that will let you seemingly make sound out of nothing called “MusicFX”, with more of these experiments to come.

There are a lot of improvements to AI models in general, it seems, not just to search but to how Google makes videos and music out of nothing more than mere text, and a lot of information to digest.

Improvements to AI

Last but not least, Google’s AI is improving with various models that more business-minded and developer folks might want to use. Various model improvements are on the way for different versions of Gemini, handy for folks who want to integrate Google’s technologies into their own.