Train a computer to do something, and it can end up doing some pretty cool things. That training sits under the hood of many apps these days, cleaning up photos and videos and even trying to take the pain out of the constant stream of scam calls coming your way. It can also make life easier for people who need a little extra help.

That’s the message coming out of Apple this week, which is talking up changes to its products ahead of Global Accessibility Awareness Day on May 19, and explaining how it is employing artificial intelligence and machine learning to make its gadgets more helpful.

For instance, Apple’s VoiceOver screen reader is getting new languages including Ukrainian and Vietnamese, among others, and VoiceOver users will be able to pick up on spelling and proofreading issues thanks to a new Text Checker tool coming to Mac.

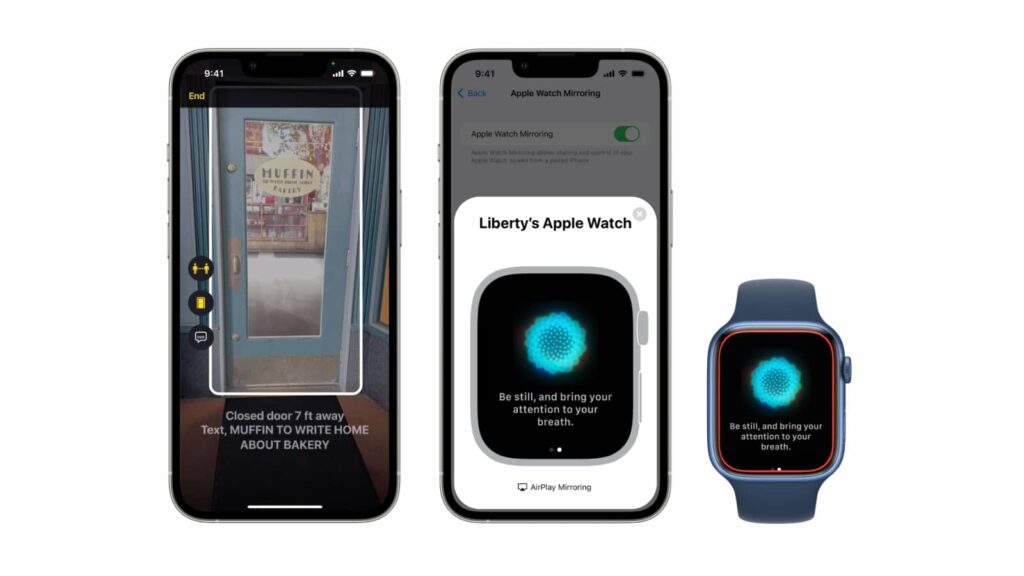

Recent premium iPhone and iPad models with the LiDAR Scanner at the back will help in a slightly different way, telling people where doors are. If you have an iPhone 12 Pro, iPhone 12 Pro Max, iPhone 13 Pro, iPhone 13 Pro Max, or an iPad Pro with the large camera block at the back, you have access to the LiDAR Scanner, a depth sensor that picks up on objects and shapes for use in augmented reality applications.

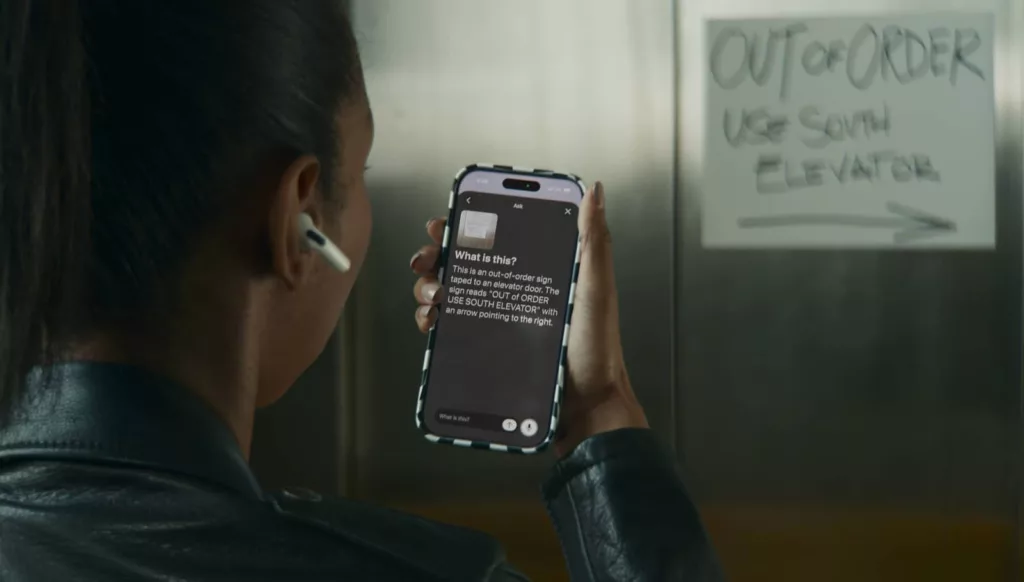

It’s this technology that Apple is using for a feature it calls “Door Detection”, which uses machine learning to tell a phone’s owner whether a nearby door is open or closed, and whether there’s a handle that can be pushed or turned. It can even pick up on signs and numbers to provide a sort of in-person navigation system for people who can’t see what they’re walking to.

Door Detection is coming to Apple’s Detection Mode in Magnifier, an app for people who are blind or have low vision, and it can work with VoiceOver to provide walking directions, too.

“Apple embeds accessibility into every aspect of our work, and we are committed to designing the best products and services for everyone,” said Sarah Herrlinger, Senior Director of Accessibility Policy and Initiatives at Apple.

“We’re excited to introduce these new features, which combine innovation and creativity from teams across Apple to give users more options to use our products in ways that best suit their needs and lives,” she said.

Those features will arrive alongside more controls for Apple Watch owners, with Voice Control and Switch Control on an iPhone able to trigger Apple Watch features, including those found on the Apple Watch Series 7, such as blood oxygen and heart rate tracking.

At the same time, gestures are coming to Apple Watch to trigger phone call actions, pause and play music, and control workouts.

And there’s also one more feature Apple users gain, though not yet in Australia: Live Captions.

Using machine learning and the ability to understand languages, Apple is testing automatic live captions, something you might have seen on YouTube and Android previously, though Apple’s version will appear practically anywhere.

Apple’s Live Captions feature is coming to iPhone, iPad, and Mac, and while it will work on content you play in your browser or on social media, Apple also suggests it will work on FaceTime calls, allowing people who are deaf or hard of hearing to read the room quite literally by reading the output of a call as it happens.

At the moment, Live Captions appears to be available only in the US and Canada, but we’re checking with Apple in Australia to find out when it is coming locally. It could very much be a situation similar to how Sonos Voice isn’t ready for Australian voices and accents just yet, and Apple’s accessibility team may need more time to tweak how its AI and machine learning systems handle Australian voices before we see the feature locally.

For now, that feature remains on the cards, but the others should be arriving shortly, alongside some other things Apple has planned this week for Global Accessibility Awareness Day. Apple Fitness+ will add a sign language feature, Apple Music will feature “Saylists” playlists, each focusing on a different sound for people practicing vocal sounds or in speech therapy, and the App Store will show more accessibility-focused apps and stories.